Keeping up with an industry as fast-moving as AI is a tall order. So until an AI can do it for you, here’s a handy roundup of recent stories in the world of machine learning, along with notable research and experiments we didn’t cover on their own.

This week in AI, Microsoft unveiled a new standard PC keyboard layout with a “Copilot” key. You heard correctly — going forward, Windows machines will have a dedicated key for launching Microsoft’s AI-powered assistant Copilot, replacing the right Control key.

The move is meant, one imagines, to signal the seriousness of Microsoft’s investment in the race for consumer (and enterprise for that matter) AI dominance. It’s the first time Microsoft’s changed the Windows keyboard layout in ~30 years; laptops and keyboards with the Copilot key are scheduled to ship as soon as late February.

But is it all bluster? Do Windows users really want an AI shortcut — or Microsoft’s flavor of AI period?

Microsoft’s certainly made a show of injecting nearly all its products old and new with “Copilot” functionality. In flashy keynotes, slick demos and, now, an AI key, the company’s making its AI tech prominent — and betting on this to drive demand.

Demand isn’t a sure thing. But to be fair, a few vendors have managed to turn viral AI hits into successes. Look at OpenAI, the maker of ChatGPT, which reportedly topped $1.6 billion in annualized revenue toward the end of 2023. Generative art platform Midjourney is apparently profitable, too, and hasn’t yet taken a dime of outside capital.

Emphasis on a few, though. Most vendors, weighed down by the costs of training and running cutting-edge AI models, have had to seek larger and larger tranches of capital to stay afloat. Case in point: Anthropic is said to be raising $750 million in a round that would bring its total raised to more than $8 billion.

Microsoft, together with its chip partners AMD and Intel, hopes that AI processing will increasingly move from expensive datacenters to local silicon, commoditizing AI in the process, and it might well be right. Intel’s new lineup of consumer chips packs custom-designed cores for running AI. Plus, new datacenter chips like Microsoft’s own could make model training a less expensive endeavor than it is currently.

But there’s no guarantee. The real test will be seeing whether Windows users and enterprise customers, bombarded with what amounts to Copilot advertising, show an appetite for the tech — and shell out for it. If they don’t, it might not be long before Microsoft has to redesign the Windows keyboard once again.

Here are some other AI stories of note from the past few days:

- Copilot comes to mobile: In more Copilot news, Microsoft quietly brought Copilot clients to Android and iOS, along with iPadOS.

- GPT Store: OpenAI announced plans to launch a store for GPTs, custom apps based on its text-generating AI models (e.g. GPT-4), within the next week. The GPT Store was announced last year during OpenAI’s first annual developer conference, DevDay, but delayed in December — almost certainly due to the leadership shakeup that occurred in November just after the initial announcement.

- OpenAI shrinks reg risk: In other OpenAI news, the startup’s looking to shrink its regulatory risk in the EU by funneling much of its overseas business through an Irish entity. Natasha writes that the move will reduce the ability of some privacy watchdogs in the bloc to unilaterally act on concerns.

- Training robots: Google’s DeepMind Robotics team is exploring ways to give robots a better understanding of precisely what it is we humans want out of them, Brian writes. The team’s new system can manage a fleet of robots working in tandem and suggest tasks that can be accomplished by the robots’ hardware.

- Intel’s new company: Intel is spinning out a new platform company, Articul8 AI, with the backing of Boca Raton, Florida–based asset manager and investor DigitalBridge. As an Intel spokesperson explains, Articul8’s platform “delivers AI capabilities that keep customer data, training and inference within the enterprise security perimeter” — an appealing prospect for customers in highly regulated industries like healthcare and financial services.

- Dark fishing industry, exposed: Satellite imagery and machine learning offer a new, far more detailed look at the maritime industry, specifically the number and activities of fishing and transport ships at sea. Turns out there are way more of them than publicly available data would suggest — a fact revealed by new research published in Nature from a team at Global Fishing Watch and multiple collaborating universities.

- AI-powered search: Perplexity AI, a platform applying AI to web searching, raised $73.6 million in a funding round valuing the company at $520 million. Unlike traditional search engines, Perplexity offers a chatbot-like interface that allows users to ask questions in natural language (e.g. “Do we burn calories while sleeping?,” “What’s the least visited country?,” and so on).

- Clinical notes, written automatically: In more funding news, Paris-based startup Nabla raised a cool $24 million. The company, which has a partnership with Permanente Medical Group, a division of U.S. healthcare giant Kaiser Permanente, is working on an “AI copilot” for doctors and other clinical staff that automatically takes notes and writes medical reports.
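The basic bookkeeping behind the dark fishing finding can be sketched simply: a vessel spotted in satellite imagery that doesn’t line up with any publicly broadcast position is a candidate “dark” vessel. This is a simplification, not the Global Fishing Watch pipeline. It assumes the public data are AIS (Automatic Identification System) position reports already aligned to the image’s timestamp, uses invented coordinates, and treats a detection as matched if any AIS report lies within a hypothetical 1 km radius.

```python
import math


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two lat/lon points."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def dark_detections(detections, ais_positions, max_km=1.0):
    """Return satellite detections with no AIS report within max_km.
    Assumes both inputs are (lat, lon) tuples for the same moment in time;
    the 1 km default is a hypothetical matching radius."""
    return [
        det for det in detections
        if all(haversine_km(det[0], det[1], ais[0], ais[1]) > max_km
               for ais in ais_positions)
    ]


# Invented example: three vessels seen in imagery, only one broadcasting AIS.
detections = [(10.0, 20.0), (10.5, 20.5), (11.0, 21.0)]
ais_positions = [(10.001, 20.001)]

dark = dark_detections(detections, ais_positions)
print(dark)  # [(10.5, 20.5), (11.0, 21.0)]
```

Real pipelines also have to handle timing gaps, detection confidence and vessel classification, which is where much of the machine learning comes in.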

More machine learnings

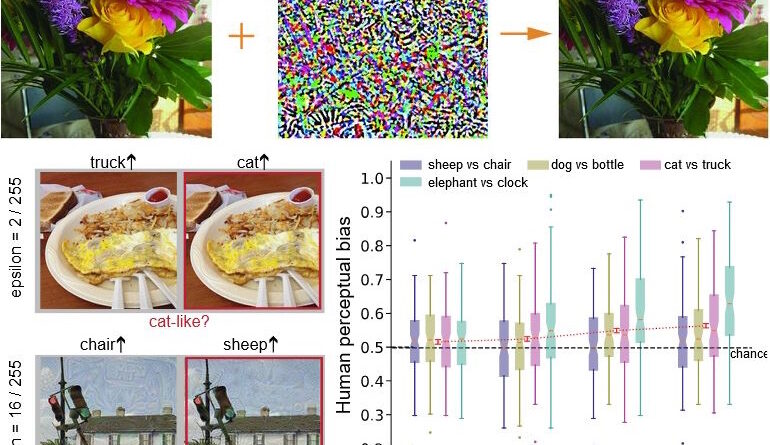

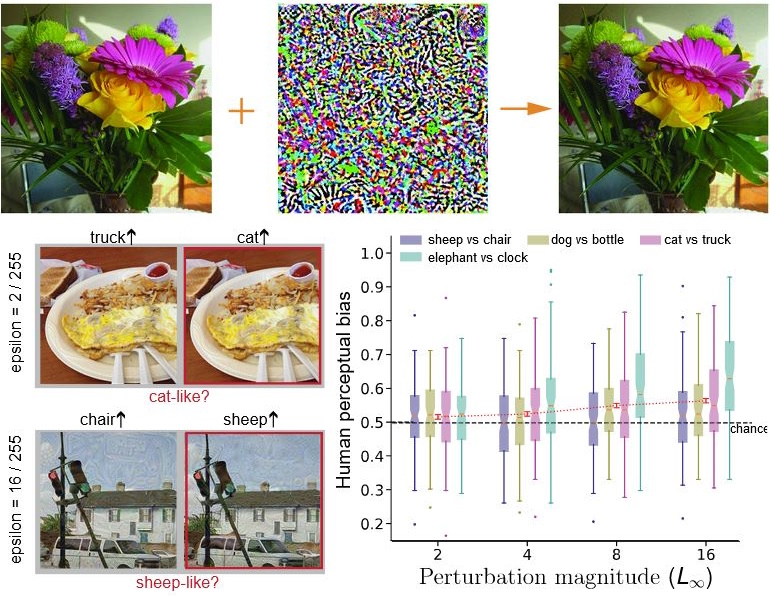

You may remember various examples of interesting work over the last year involving making minor changes to images that cause machine learning models to mistake, for instance, a picture of a dog for a picture of a car. Researchers do this by adding “perturbations,” minor changes to the pixels of the image, in a pattern that only the model can perceive. Or at least, they thought only the model could perceive it.
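For the curious, here is roughly what such a perturbation looks like in its simplest form. This is a minimal sketch, not the method from the DeepMind study: it assumes a hypothetical linear “dog vs. car” classifier over a six-pixel image with made-up weights, and applies the fast gradient sign method (FGSM), a classic attack that shifts every pixel by a small, fixed amount in whichever direction pushes the model’s score toward the wrong label.

```python
# Minimal FGSM sketch on a hypothetical linear classifier (weights invented).
# Positive score -> "car", negative score -> "dog".

def score(weights, pixels, bias):
    """Linear model: weighted sum of pixel intensities plus a bias."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias


def label(s):
    return "car" if s > 0 else "dog"


def sign(x):
    """Return 1, -1 or 0 depending on the sign of x."""
    return (x > 0) - (x < 0)


def fgsm_perturb(weights, pixels, epsilon):
    """For a linear model, the gradient of the score with respect to each pixel
    is that pixel's weight, so FGSM moves every pixel by epsilon in the
    direction of sign(weight), which raises the "car" score. Values are
    clamped to the valid [0, 1] intensity range."""
    return [min(1.0, max(0.0, p + epsilon * sign(w)))
            for w, p in zip(weights, pixels)]


weights = [0.9, -0.4, 0.7, -0.2, 0.8, -0.6]  # hypothetical trained weights
bias = -0.3
dog_image = [0.2, 0.5, 0.1, 0.6, 0.2, 0.4]   # a tiny 6-pixel "dog" image

original = score(weights, dog_image, bias)
adversarial_image = fgsm_perturb(weights, dog_image, epsilon=0.15)
perturbed = score(weights, adversarial_image, bias)

print(label(original), round(original, 2))    # dog -0.45
print(label(perturbed), round(perturbed, 2))  # car 0.09
```

No pixel moves by more than 0.15 on a 0-to-1 scale, yet the label flips, because the many small nudges all push the score the same way.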

An experiment by Google DeepMind researchers showed that when a picture of flowers was perturbed to appear more catlike to AI, people were more likely to describe that image as more catlike despite its definitely not looking any more like a cat. Same for other common objects like trucks and chairs.

Image Credits: Google DeepMind

Why? How? The researchers don’t really know, and the participants all felt like they were just choosing randomly (indeed the influence, while reliable, is scarcely above chance). It seems we’re just more perceptive than we think, but this also has implications for safety and other measures, since it suggests that subliminal signals could indeed propagate through imagery without anyone noticing.

Another interesting experiment involving human perception came out of MIT this week; the researchers used machine learning to help elucidate a particular system of language understanding. Basically, some simple sentences, like “I walked to the beach,” barely take any brain power to decode, while complex or confusing ones like “in whose aristocratic system it effects a dismal revolution” produce more and broader activation, as measured by fMRI.

The team compared the activation readings of humans reading a variety of such sentences with how the same sentences activated the equivalent of cortical areas in a large language model. Then they made a second model that learned how the two activation patterns corresponded to one another. This model was able to predict for novel sentences whether they would be taxing on human cognition or not. It may sound a bit arcane, but it is definitely super interesting, trust me.
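The “second model” step is easier to picture with a toy version. The sketch below assumes a simple linear encoding model fit with ridge regression (a common choice for mapping language model activations to brain responses, though we’re not claiming it matches the MIT team’s exact setup), and every number in it is invented: each sentence is reduced to a hypothetical three-number summary of LLM activations, paired with a made-up brain response, and the “taxing” threshold is arbitrary.

```python
# Toy encoding model: predict a (hypothetical) brain response from a
# (hypothetical) summary of LLM activations. All numbers are invented.

train_features = [
    [0.1, 0.2, 0.1],  # stands in for a simple sentence
    [0.2, 0.1, 0.3],
    [0.8, 0.9, 0.7],  # stands in for a convoluted sentence
    [0.7, 0.6, 0.9],
]
train_responses = [0.3, 0.4, 1.6, 1.5]


def predict(w, b, row):
    """Linear prediction: weighted sum of activation features plus intercept."""
    return sum(wi * xi for wi, xi in zip(w, row)) + b


def fit_ridge(X, y, alpha=0.01, lr=0.1, steps=5000):
    """Minimize mean squared error + alpha * ||w||^2 by gradient descent.
    The penalty applies to the weights only, not the intercept b."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(steps):
        errors = [predict(w, b, row) - target for row, target in zip(X, y)]
        grad_w = [2 * sum(e * row[j] for e, row in zip(errors, X)) / n
                  + 2 * alpha * w[j] for j in range(d)]
        grad_b = 2 * sum(errors) / n
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def is_taxing(w, b, row, threshold=1.0):
    """Flag a sentence as cognitively demanding if its predicted response
    exceeds a hypothetical threshold."""
    return predict(w, b, row) > threshold


w, b = fit_ridge(train_features, train_responses)

# Novel "sentences" the model never saw during fitting.
print(is_taxing(w, b, [0.9, 0.8, 0.8]))    # True
print(is_taxing(w, b, [0.15, 0.15, 0.2]))  # False
```

The key property is the last two lines: once the mapping is learned, it can score sentences that were never shown to a human in the scanner.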

Whether machine learning can imitate human cognition in more complex areas, like interacting with computer interfaces, is still very much an open question. There’s lots of research, though, and it’s always worth taking a look at. This week we have SeeAct, a system from Ohio State researchers that works by laboriously grounding an LLM’s interpretations of possible actions in real-world examples.

Image Credits: Ohio State University

Basically, you can ask a system like GPT-4V to create a reservation on a site, and it will understand its task and that it needs to click the “make reservation” button, but it doesn’t really know how to do that. By improving how it perceives interfaces with explicit labels and world knowledge, it can do lots better, even if it still succeeds only a fraction of the time. These agent models have a long way to go, but expect a lot of big claims this year anyway! I just heard some today.
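The hard part here, turning “click the reservation button” into an actual element on the page, is usually called grounding. The sketch below is a deliberately naive stand-in, not SeeAct’s method: it assumes the model has already produced a text description of its target, and picks the clickable element on a hypothetical page whose visible text or aria-label overlaps most with that description (Jaccard similarity over words). The page structure, element ids and the `ground_action` helper are all made up for illustration.

```python
import re


def tokens(text):
    """Lowercase word set, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def ground_action(target_description, elements):
    """Hypothetical grounding step: return the clickable element whose visible
    text or aria-label best overlaps the target description (Jaccard
    similarity over words), or None if nothing overlaps at all."""
    target = tokens(target_description)
    best, best_score = None, 0.0
    for el in elements:
        if el["tag"] not in {"button", "a", "input"}:
            continue  # skip non-interactive elements such as plain divs
        label = tokens(el.get("text", "") + " " + el.get("aria_label", ""))
        if not label:
            continue
        score = len(target & label) / len(target | label)
        if score > best_score:
            best, best_score = el, score
    return best


# Invented page: note the promo div mentions reservations but isn't clickable.
page = [
    {"id": "nav-home", "tag": "a", "text": "Home"},
    {"id": "date-picker", "tag": "input", "aria_label": "Reservation date"},
    {"id": "submit-btn", "tag": "button", "text": "Make reservation"},
    {"id": "promo", "tag": "div", "text": "Make a reservation today and save"},
]

choice = ground_action("make reservation button", page)
print(choice["id"])  # submit-btn
```

String matching like this breaks quickly on real sites (unlabeled icons, duplicate buttons, text baked into images), which hints at why richer grounding that draws on page structure, screenshots and world knowledge helps.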

Next, check out this interesting solution to a problem I had no idea existed but which makes perfect sense. Autonomous ships are a promising area of automation, but when the sea is angry it is difficult to make sure they’re on track. GPS and gyros don’t cut it, and visibility can be poor too — but more importantly, the systems governing them aren’t too sophisticated. So they can go wildly off target or waste fuel going on large detours if they don’t know any better, a big problem if you’re on battery power. I never even thought about that!

Korea Maritime and Ocean University (another thing I learned about today) proposes a more powerful pathfinding model built on simulating ship movements in a computational fluid dynamics model. The researchers propose that this better understanding of wave action and its effect on hulls and propulsion could seriously improve the efficiency and safety of autonomous marine transport. It might even make sense to use in human-guided vessels whose captains aren’t quite sure what the best angle of attack is for a given squall or wave form!
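The intuition for why a better model of the sea saves energy can be sketched with a classic shortest-path search. This is an illustration under heavy assumptions, not the Korean team’s approach: instead of a computational fluid dynamics simulation, it uses a tiny hand-made grid in which each cell’s number is a hypothetical energy cost for crossing it (1 for calm water, 6 for a rough patch), and runs Dijkstra’s algorithm to find the cheapest route.

```python
import heapq


def cheapest_route(cost, start, goal):
    """Dijkstra's algorithm on a 4-connected grid. Entering cell (r, c) costs
    cost[r][c]. Assumes the goal is reachable. Returns (total_cost, path)."""
    rows, cols = len(cost), len(cost[0])
    best = {start: 0}
    prev = {}
    heap = [(0, start)]
    while heap:
        dist, cell = heapq.heappop(heap)
        if cell == goal:
            break
        if dist > best[cell]:
            continue  # stale queue entry; a cheaper route was already found
        r, c = cell
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols:
                new_dist = dist + cost[nr][nc]
                if new_dist < best.get((nr, nc), float("inf")):
                    best[(nr, nc)] = new_dist
                    prev[(nr, nc)] = cell
                    heapq.heappush(heap, (new_dist, (nr, nc)))
    # Walk the predecessor links back from the goal to rebuild the route.
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    path.reverse()
    return best[goal], path


# Hypothetical energy cost per cell: 1 = calm water, 6 = rough patch.
sea = [
    [1, 1, 1, 1, 1],
    [1, 6, 6, 6, 1],
    [1, 6, 6, 6, 1],
    [1, 1, 1, 1, 1],
]
start, goal = (1, 0), (1, 4)

total, path = cheapest_route(sea, start, goal)
straight_cost = sum(sea[1][c] for c in range(1, 5))  # plow straight through

print(total, straight_cost)  # 6 19
print(path)  # [(1, 0), (0, 0), (0, 1), (0, 2), (0, 3), (0, 4), (1, 4)]
```

On this made-up grid the planner takes six calm cells around the rough patch (total cost 6) rather than the straight four-cell run through it (cost 19). The better the cost estimates, the better that trade-off gets made, which is the point of feeding a planner a physics-based model of the waves.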

Last, if you want a good recap of last year’s big advances in computer science, which in 2023 overlapped massively with ML research, check out Quanta’s excellent review.
